Part 2: Basic CUDA

There’s a good first CUDA tutorial here: https://developer.nvidia.com/blog/even-easier-introduction-cuda/. Then there are the docs here: https://docs.nvidia.com/cuda/cuda-c-programming-guide/#.

The docs describe three levels of the thread hierarchy — thread blocks, thread block clusters, and grids. We will use only one thread block, because it seems easiest — in particular, sharing memory outside thread blocks seems difficult. This will limit the level of parallelism we’ll be able to achieve; a future version could try to use multiple thread blocks.

The current simulation code ( World::step ) looks like this:

for(int i = 0; i < GRID_HEIGHT; i++) {

(*other_grid)[i][0] = 1.0;

for(int j = 1; j<GRID_WIDTH; j++) {

(*other_grid)[i][j] = (*current_grid)[i][j-1];

}

}

This can be easily converted to a CUDA kernel:

using PlainCGrid = double*;

__global__

void cuda_step(PlainCGrid in, PlainCGrid out) {

for(int i = 0; i < GRID_HEIGHT; i++) {

out[i*GRID_WIDTH] = 1.0;

for(int j = 1; j<GRID_WIDTH; j++) {

out[i*GRID_WIDTH + j] = in[i*GRID_WIDTH + j-1];

}

}

}

void World::step() {

cuda_step<<<1, 1>>>(

reinterpret_cast<double*>(current_grid->_data.data()),

reinterpret_cast<double*>(other_grid->_data.data())

);

std::swap(other_grid, current_grid);

}

Now we’ll have to compile it, ideally not manually but by integrating it into the CMake project. A CUDA/CMake tutorial can be found here: https://developer.nvidia.com/blog/building-cuda-applications-cmake/

This is where I notice it doesn’t work; I just get some garbled triangles. The error is not with the C-style rewrite, as removing the __global__ and the <<<1,1>>> works perfectly (see commit 1b6d3b9d). Probably I am doing something wrong with CUDA memory management.

One problem is that we were using std::arrays , but now we need CUDA-managed memory, not “regular” memory. CUDA can’t interact with the “regular” memory stored in the std::array. All of the following do not work:

most C++ containers take as a template parameter an “allocator” which can allocate memory in non-standard ways. However std::array doesn’t (probably because it doesn’t actually allocate memory on the heap, but is just a wrapper around stack memory)

A

std::arrayalso cannot be constructed by providing the memory (probably for the same reason).I could use an

std::vector, but I want the size of the grid not to change after initialization, so using a resizable vector seems inelegant to me.In C++23, I could use

std::span, but I’m still targeting C++17I could write some custom class that is an array with managed memory, but I already have

Gridthat provides most of that functionality. So I’ll adapt theGridclass.

After adapting Grid, there’s another problem. The program flow (in main) looks like this: some memory is dynamically allocated by Grid, GLFW accesses that memory, then Grid is deleted before GLFW, the memory is freed, and we get a segfault. The short-term solution is to initialize Grid before GLFW. The long-term solution would be to use a smart pointer, or to use Rust, where that kind of thing can’t happen ;)

By c3173dd, after another trivial bug fix, this works and there’s again an unit-height wave coming from the right side. So everything works as it did before, but with CUDA. (Something that was helpful for the trivial bug fix: I can set the GRID_WIDTH and GRID_HEIGHT constant to 10 each and then print the grid to see what happens.)

Now I would like to have some animation in the visualization. The first step is to replace the for-loop in main (that only does 20 timesteps) with a while loop that runs forever. However, that breaks the program flow. The previous program flow was:

20 simulation iterations are performed and visualized at the same time.

mainexits and waits for the destructor ofMyGLFW, i.e. the visualization, which waits for the UI thread to exit.When the user presses Exit or closes the window, the UI thread exits and the program stops.

Now we need a more complex flow, which can handle both the user closing the UI thread, the main thread getting an interrupt signal, or the main thread exiting for any other reason. My solution looks like this:

The UI (

class MyGLFW) has a state that can beRUNNINGorKILLED(i.e. the user wants to close the window but it hasn’t been closed yet). The window is started in the constructor and closed in the destructor, so there’s no other state (if theMyGLFWexists, then it has a GLFW window somewhere).The state can be accessed by both threads, and is protected by a mutex. When the UI thread wants to exit, it sets the state to

KILLED. When the main thread calls the destructor, the state is also set toKILLED.On the other side, the UI thread regularly checks the state. If it’s killed, it exits the render loop. Whenever the main loop tries to render something and the UI has been killed, this raises an exception (which is caught but breaks the main loop).

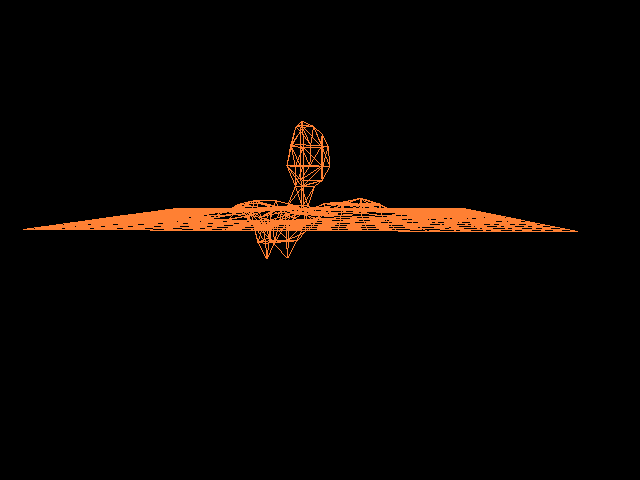

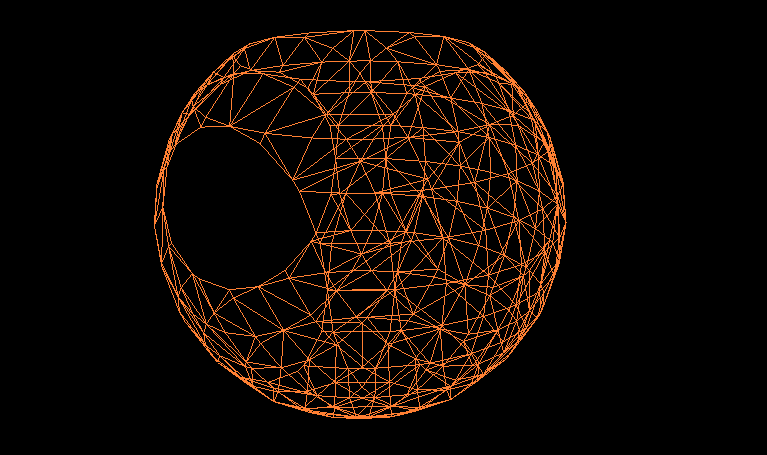

This is done by commit bde64e4. There seems still to be a bug with some huge triangles in the rendering; I’ll debug that later.

I notice that the MyGLFW class does a bit too much and has essentially two separate responsibilities:

Wrap the GLFW state and ensure the window is properly closed in the destructor

Provide synchronization between the main loop and the UI window

To have a clearer (and thereby probably more robust) program, I’ve split the class into two classes (MyGLFW and renderer). This is done in a8a27e3.

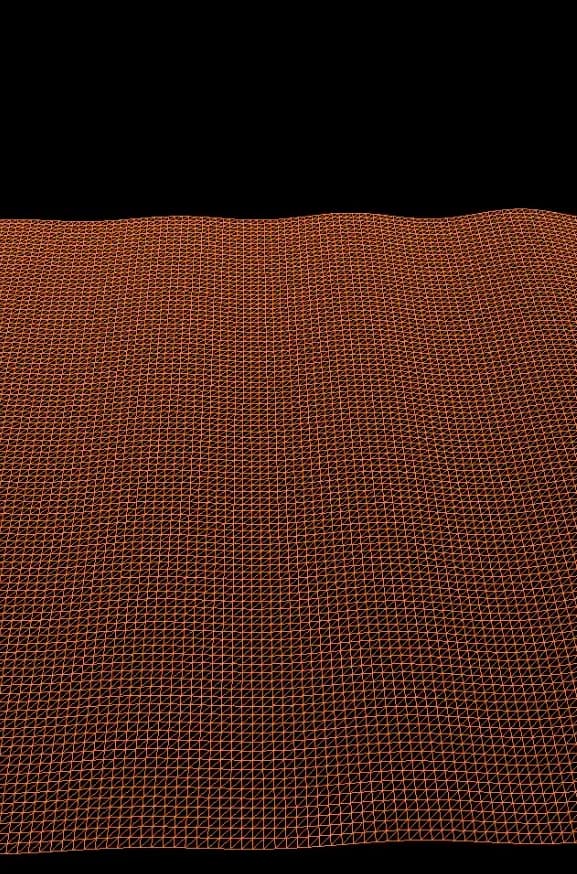

Now that the UI and simulation can run in parallel, let’s add some more complicated boundary conditions, such as a sine. Some considerations to take into account:

We can vary the wave speed $v$ and the sine period. To view an interesting sine, I need a high sine period, a high grid size, and a low wave speed. Probably I’ll need to take into account the CFL condition later.

For debugging, I can set a small grid size, replace the while loop back with a for loop, and print the grid for the first few iterations. Prior to printing the grid (which is in GPU memory but will be mostly copied on the CPU), the program needs to call

cudaDeviceSynchronize. (I found that out through this doc, which wisely states “We all make this mistake once".)I can also add an option to not use CUDA, to can distinguish between CUDA usage errors and logic errors (see commit 2cb856f)

With 49f7293, the grid size also becomes variable — this goes against what I said previously about having “the container implicitly contain its size”, but it’s a pretty convenient feature. (Since that is no longer a reason, could we use an std container? No — there’s no 2D vector in the STD. There’s a vector of vectors, but it wouldn’t be in continuous memory. So our solution is still the most elegant.)

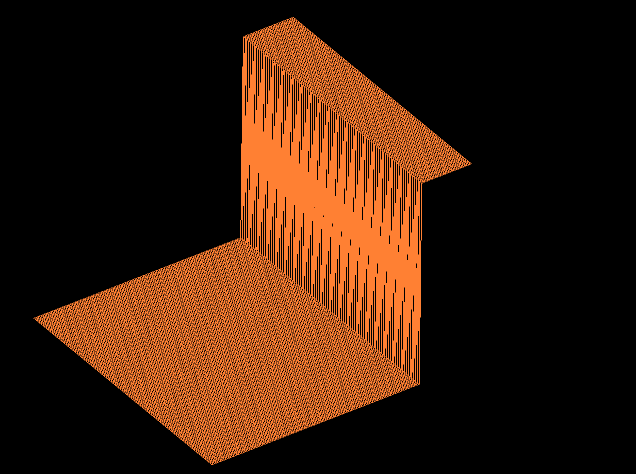

With f356508, the boundary condition becomes a sine wave, so we can see the simulation actually doing something.

This brings us to the remaining tasks / directions to continue the program:

Fix the OpenGL bugs (rectangle getting outside the screen all the time, too large triangles, etc.)

Flesh out the simulation: use different schemes, different equations, different grids, and different boundary conditions

Learn more about CUDA by adding more “difficult” parallelization, e.g. the grids and thread blocks clusters mentioned in the intro. Right now we only use one CUDA thread (AFAIK). This is sufficient, but the goal of this project is to learn about CUDA, so we should use something more complex than that. We can artificially create the need for more complex structures by using more complex grids (e.g. split up the grid into different domains).